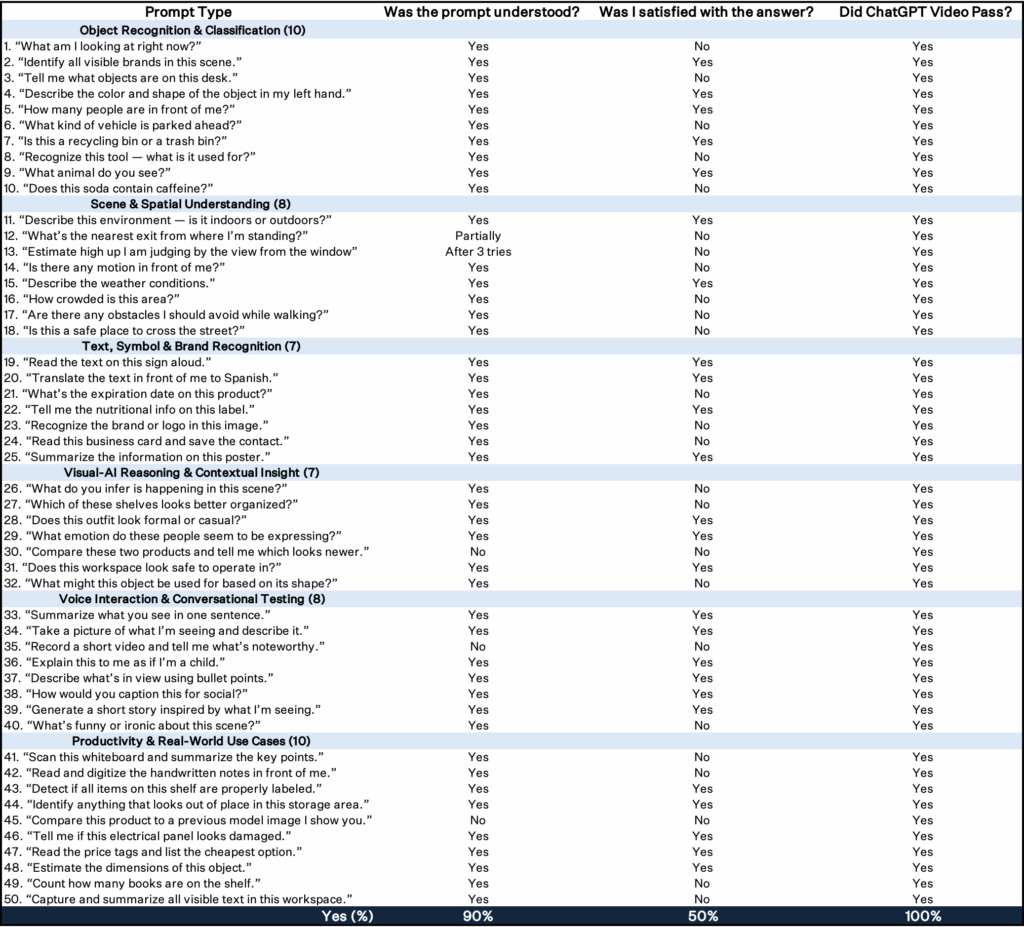

The Scoreboard: Meta AI vs ChatGPT

On November 13, we wrote that Meta’s new Display glasses leave you impressed by the tech but short on reasons to use them. One of the key takeaways from that piece was blunt: Meta AI, a primary selling point, felt largely useless compared with ChatGPT or Grok. (See: Meta’s Wearables Have a Long Way To Go)

After that article, we set up a more structured side-by-side test: 50 prompts run through Meta AI on the Meta Display, and the same 50 prompts run through ChatGPT’s video conversation tool on an iPhone. Across those prompts, Meta AI on the Display understood the request about 90% of the time, and I was satisfied with its answers only around half the time. ChatGPT, using its video model, understood all 50 prompts and delivered satisfactory responses 98% of the time (49 of 50).

I found that Meta AI isn’t just behind on edge cases; it struggles with basic comprehension, which makes it of borderline use for everyday tasks. The one bright spot for Meta AI was foreign-language translation.